GEO & E-E-A-T: Get Your Content Cited by AI

What Generative Engine Optimization (GEO) is, how E-E-A-T signals feed into it, and what you can do today to get your content cited by AI search.

Last updated: 2026-03-27 · Tested against Google AI Overviews, ChatGPT, Perplexity, and Claude (March 2026)

Contents

- What is GEO and why should you care?

- How does GEO differ from traditional SEO?

- What are the top GEO optimization methods?

- How do E-E-A-T signals work for AI search?

- How do you implement structured data for GEO?

- What content formats perform best in AI search?

- How do you measure GEO performance?

- Frequently asked questions

- Your GEO action plan

What is GEO and why should you care?

Generative Engine Optimization is the practice of structuring your content so AI search engines cite it in their responses. When someone asks ChatGPT "how do I set up a CI/CD pipeline?" or queries Perplexity about self-hosting options, the AI pulls information from indexed content and synthesises an answer. GEO is about making sure your content is part of that synthesis.

This matters because the way people search is shifting. Traditional Google queries average about 4 words. AI queries average 23 words; they're conversational, specific, and often compound questions. The GEO study (Aggarwal et al., KDD 2024), a multi-institutional collaboration involving IIT Delhi and Princeton, found that top-performing GEO methods improved Position-Adjusted Word Count by 30-40% and Subjective Impression scores by 15-30% compared to unoptimised pages.

In our ZeroShot Studio workflows, we've been building content with GEO principles from day one. The results are measurable: our technical guides get cited by Perplexity and Claude when users query topics we cover. That didn't happen by accident. It happened because we structured every post for extraction, not just for reading.

How does GEO differ from traditional SEO?

The goals are different. SEO gets you ranked in a list of blue links. GEO gets you cited inside an AI-generated answer. Both matter, but they reward different content qualities.

| Aspect | Traditional SEO | GEO |
|---|---|---|
| Goal | Rank in search results | Get cited in AI responses |
| Query length | ~4 words average | ~23 words average |
| Primary metric | Click-through rate | Citation frequency |
| Content approach | Keyword-optimised | Answer-optimised |
| Structure | Designed for scanners | Designed for extractors |
| Authority signal | Backlinks | Cross-platform presence + verifiable claims |
| Content freshness | Helpful | Critical |
| Timeline to results | 3-6 months | 3-6 months |

The good news: industry practitioners estimate roughly 80% of solid SEO practice carries directly into GEO (Digiday). Clean structure, genuine expertise, specific data, proper schema markup. The remaining 20% is GEO-specific: paragraph density, answer nugget density, first-hand experience markers, and machine-readable endpoints like llms.txt. Google's Search Quality Evaluator Guidelines cover the E-E-A-T framework that underpins much of this.

What's llms.txt? A structured text file at your site root (like robots.txt) that tells AI crawlers what your site covers, who writes it, and where to find the good stuff. It's the AI equivalent of rolling out the welcome mat.

Traditional SEO tactics like keyword stuffing actually performed poorly in generative contexts according to the GEO study. The algorithms that power AI search reward substance over optimisation tricks. Which, honestly, is refreshing.

What are the top GEO optimization methods?

The GEO study ranked optimization methods by their impact on AI citation rates. The results surprised a lot of people because the winners aren't what most SEO practitioners focus on.

The top 6 methods, ranked by impact:

- Statistics addition. Include specific, verifiable numbers throughout your content. "Reduced deployment time from 45 minutes to 12 minutes" is citable. "Significantly improved efficiency" is not. AI systems can verify and reproduce specific claims, which makes them more likely to surface your content.

- Source citations. Link to credible external sources for your claims. When you cite a research paper, official documentation, or industry report, the AI can cross-reference your claim against the source. This verification loop builds trust in the system's assessment of your content.

- Quotation addition. Include relevant expert perspectives. Quotes from named individuals with verifiable roles add a layer of authority that AI systems weight heavily. This works because it connects your content to the broader knowledge graph through real people.

- Content comprehensiveness. Be the definitive resource on your topic. AI systems prefer content that answers the primary question AND anticipated follow-up questions. A comprehensive guide gets cited more than a thin overview because the AI can pull multiple relevant passages from a single source.

- Structural clarity. Logical organisation with clear headings, self-contained sections, and explicit structure. AI retrieval systems chunk content by section. If each chunk stands alone semantically, the entire page becomes more useful as a citation source.

- Entity clarity. Named tools, companies, protocols, and people throughout the content. Mentioning "Claude Code", "Anthropic", "Next.js", "JSON-LD" strengthens the semantic graph around your page. When someone queries about those entities, your page is more likely to surface.

Answer nugget density

Here's a concept that changed how we write at ZeroShot Studio. An answer nugget is a short factual passage, a stat, a direct instruction, a concrete outcome, that can be quoted or cited without needing surrounding context.

Aim for at least 6 clean answer nuggets per 1,000 words. Each one should work as a standalone fact if an AI extracts just that sentence. "PostgreSQL 16 with pgvector handles 768-dimensional embeddings at sub-50ms query times for collections under 100K records" is an answer nugget. "The database performs well" is not.
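A rough way to check a draft against that target is to count candidate nuggets automatically. The sketch below is only a heuristic and makes its own assumptions: the function name `nugget_density` is hypothetical, and "nugget" is approximated as a sentence of 8 to 40 words that contains at least one digit. It will miss nuggets without numbers and can over-count, so treat the score as a prompt for manual review.

```python
import re


def nugget_density(text: str) -> float:
    """Estimate answer nuggets per 1,000 words.

    Heuristic (an assumption, not a standard metric): a sentence counts as a
    candidate nugget if it contains at least one digit and has 8-40 words.
    """
    words = text.split()
    if not words:
        return 0.0
    # Split on sentence-ending punctuation followed by whitespace, so
    # version numbers such as "16.1" are not split apart.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    nuggets = [
        s for s in sentences
        if re.search(r"\d", s) and 8 <= len(s.split()) <= 40
    ]
    return len(nuggets) * 1000 / len(words)


sample = (
    "PostgreSQL 16 with pgvector handles 768-dimensional embeddings at "
    "sub-50ms query times for collections under 100K records. "
    "The database performs well."
)
print(nugget_density(sample))
```

On a full post, a result below 6 suggests the draft needs more specific, quotable facts.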

Paragraph density

AI retrieval systems break content into chunks and evaluate whether each chunk stands alone. Keep paragraphs under 120 words, 2-3 sentences each, active voice throughout. A paragraph that depends on three previous paragraphs for meaning is weaker than one that answers a question directly and independently.
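The paragraph limits above are easy to lint for. This is a minimal sketch, assuming paragraphs are separated by blank lines and sentences end in `.`, `!`, or `?`; the function name `flag_dense_paragraphs` and the constants are illustrative, not part of any published tool.

```python
import re

MAX_WORDS = 120      # paragraphs should stay under this word count
MAX_SENTENCES = 3    # upper end of the 2-3 sentence guideline


def flag_dense_paragraphs(text: str) -> list[tuple[int, int, int]]:
    """Return (paragraph_index, word_count, sentence_count) for each
    paragraph that reaches the word limit or exceeds the sentence limit."""
    flagged = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, para in enumerate(paragraphs):
        words = len(para.split())
        sentences = len([s for s in re.split(r"(?<=[.!?])\s+", para) if s])
        if words >= MAX_WORDS or sentences > MAX_SENTENCES:
            flagged.append((index, words, sentences))
    return flagged


draft = "One. Two. Three.\n\nA. B. C. D."
print(flag_dense_paragraphs(draft))
```

The check says nothing about whether a paragraph stands alone semantically; that part still needs a human read.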

How do E-E-A-T signals work for AI search?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. Google introduced the extra "E" for Experience in late 2022, and it's become even more important in the AI search era. Here's why: AI systems need to decide which sources to cite. E-E-A-T signals are how they make that decision.

Experience

First-hand experience markers are the most underrated signal in content creation. When you write "In our ZeroShot Studio workflows, we reduced context payload from 12,000 tokens to 4,200 tokens by implementing ZeroToken compression," the AI system treats that differently from "context compression can reduce token usage." One is original testing. The other is repackaged opinion.

Include these markers naturally throughout your content:

- "When auditing our Next.js stack..."

- "Across repeated Claude Code sessions, we found..."

- "After deploying this to production for 3 months..."

Share what didn't work, too. Failure acknowledgment builds trust because it signals honest reporting rather than selective presentation.

Expertise

Demonstrate depth, not breadth. A site with 12 interlinked posts about self-hosting infrastructure signals more expertise to both Google and AI systems than a site with 12 unrelated posts on different topics.

Technical specificity matters. Name exact tools, versions, and configurations. "We run PostgreSQL 16.1 with pgvector on Hetzner's CAX21 ARM instance" carries more weight than "we use a cloud database." The specificity proves you've actually touched the thing you're writing about.

Authoritativeness

This is the hardest signal to build and the most valuable. Authority comes from external validation: press mentions, backlinks from .edu and .gov domains, citations by other experts, and a consistent presence across platforms.

Schema markup amplifies authority signals. A Person entity with sameAs links to verified GitHub, LinkedIn, and professional profiles helps AI systems connect your content to your broader identity. An Organization entity with founding date, location, and verifiable history anchors your brand in the knowledge graph.

Trustworthiness

Verifiable claims linked to sources. HTTPS everywhere. Consistent NAP (Name, Address, Phone) across platforms. Security headers present. Contact information accessible. These are table stakes, but surprisingly many sites skip them.

The one that catches people out: dateModified in your schema must reflect real content edits, not deploy rebuilds. If your CI/CD pipeline updates this timestamp on every build, AI systems will learn to distrust the signal.
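One way to keep `dateModified` honest is to tie it to a hash of the post body rather than to build time. The sketch below assumes you can store a hash and a date per post between builds (for example in front matter or a small JSON file); the function names are hypothetical.

```python
import hashlib
from datetime import date


def content_fingerprint(body: str) -> str:
    """Hash the post body with whitespace normalised, so reformatting
    alone does not register as a content edit."""
    normalised = " ".join(body.split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def next_date_modified(
    body: str, stored_hash: str, stored_date: str, today: date
) -> tuple[str, str]:
    """Return (hash, dateModified). The date only moves when the body changed."""
    new_hash = content_fingerprint(body)
    if new_hash == stored_hash:
        return stored_hash, stored_date      # rebuild only: keep the old date
    return new_hash, today.isoformat()       # real edit: bump the date


old_hash = content_fingerprint("Hello  world")
unchanged = next_date_modified("Hello world", old_hash, "2026-03-22", date(2026, 4, 1))
edited = next_date_modified("Hello there", old_hash, "2026-03-22", date(2026, 4, 1))
print(unchanged[1], edited[1])
```

Whitespace normalisation is a design choice here: it stops a formatter run from counting as an edit, at the cost of ignoring changes that are purely whitespace.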

How do you implement structured data for GEO?

Schema markup is how you make your content's metadata machine-readable. For GEO, the essential schemas are Article, Person, Organization, FAQPage, HowTo, and BreadcrumbList.

The JSON-LD @graph approach

Use a single @graph array containing all your entities with @id cross-references. This approach is cleaner than multiple script tags and helps search engines understand entity relationships.

Example: JSON-LD structured data graph

```json
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@type": "Article",
      "@id": "https://yoursite.com/post-slug#article",
      "headline": "Your Post Title",
      "author": { "@id": "https://yoursite.com/#person" },
      "publisher": { "@id": "https://yoursite.com/#organization" },
      "datePublished": "2026-03-22",
      "dateModified": "2026-03-22",
      "wordCount": 2500
    },
    {
      "@type": "Person",
      "@id": "https://yoursite.com/#person",
      "name": "Your Name",
      "knowsAbout": ["AI", "web development", "self-hosting"],
      "sameAs": [
        "https://github.com/yourusername",
        "https://linkedin.com/in/yourusername"
      ]
    },
    {
      "@type": "Organization",
      "@id": "https://yoursite.com/#organization",
      "name": "Your Studio",
      "foundingDate": "2024",
      "sameAs": ["https://github.com/your-org"]
    }
  ]
}
```
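Because the `@graph` approach relies on `@id` cross-references, a dangling reference quietly breaks the entity relationships. The sketch below shows one way to catch that before publishing and to wrap the result in the usual `application/ld+json` script tag. The helper names `unresolved_ids` and `to_script_tag` are hypothetical, and the check treats any nested object whose only key is `@id` as a reference, which is an assumption about how you write your graph.

```python
import json


def unresolved_ids(graph_doc: dict) -> set[str]:
    """Return @id references that point at no entity defined in @graph."""
    entities = graph_doc.get("@graph", [])
    defined = {e["@id"] for e in entities if "@id" in e}
    referenced: set[str] = set()

    def walk(node, is_top_level: bool) -> None:
        if isinstance(node, dict):
            # A nested object containing only "@id" is treated as a reference.
            if not is_top_level and set(node) == {"@id"}:
                referenced.add(node["@id"])
            for value in node.values():
                walk(value, False)
        elif isinstance(node, list):
            for item in node:
                walk(item, False)

    for entity in entities:
        walk(entity, True)
    return referenced - defined


def to_script_tag(graph_doc: dict) -> str:
    """Serialise the graph for embedding in an HTML page."""
    payload = json.dumps(graph_doc, indent=2)
    # "\/" is a valid JSON escape; this keeps "</script>" out of the payload.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


doc = {
    "@context": "https://schema.org",
    "@graph": [
        {
            "@type": "Article",
            "@id": "https://yoursite.com/post-slug#article",
            "author": {"@id": "https://yoursite.com/#person"},
            "publisher": {"@id": "https://yoursite.com/#organization"},
        },
        {"@type": "Person", "@id": "https://yoursite.com/#person"},
    ],
}
print(unresolved_ids(doc))
```

Here the Organization entity is missing from the graph, so its `@id` is reported as unresolved.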

Conditional schemas

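Add FAQPage when your post has an FAQ section. Add HowTo when you have step-by-step instructions. Google has scaled back rich results for both since 2023 (FAQ rich results are limited to well-known government and health sites, and HowTo rich results have been retired), so treat these schemas mainly as a way to increase the surface area for AI citation.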
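A conditional schema can be generated from the same data that renders the FAQ section, so the markup never drifts from the visible text. This is a minimal sketch: `faq_page` is a hypothetical helper, and the `#faq` fragment used for the `@id` is an assumed naming convention. The `Question`, `acceptedAnswer`, and `Answer` types are the schema.org vocabulary for FAQPage.

```python
from typing import Optional


def faq_page(page_url: str, qa_pairs: list[tuple[str, str]]) -> Optional[dict]:
    """Build a FAQPage node for the @graph, or None if the post has no FAQ."""
    if not qa_pairs:
        return None  # conditional: no FAQ section, no FAQPage schema
    return {
        "@type": "FAQPage",
        "@id": f"{page_url}#faq",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


node = faq_page(
    "https://yoursite.com/post-slug",
    [("Does GEO replace traditional SEO?", "No. GEO is an additional layer.")],
)
print(node["mainEntity"][0]["name"])
```

Append the returned node to the `@graph` array only when it is not `None`.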

The Person entity matters more than you think

When auditing our own schema implementation at ZeroShot Studio, we found that adding knowsAbout with 15+ relevant skill keywords and comprehensive sameAs links noticeably improved how AI systems attributed our content. The Person entity isn't decoration. It's how the knowledge graph connects your content to your identity.

What content formats perform best in AI search?

Not all content types get cited equally. Based on the GEO study and our own observations running content through multiple AI platforms, these formats consistently outperform:

| Content Type | AI Citation Rate | Why It Works |

|---|---|---|

| Comprehensive guides | Very High | Answers primary + follow-up questions |

| FAQ pages | High | Direct question-answer mapping |

| Comparison articles | High | "X vs Y" format matches common queries |

| Step-by-step tutorials | High | HowTo schema + numbered extraction |

| Original research | Very High | Unique data AI can't get elsewhere |

| Case studies | Medium-High | Quantified results from real projects |

| Glossary entries | Medium | DefinedTerm schema for key concepts |

The pattern is clear: content that directly answers questions, includes original data, and provides structured comparison outperforms everything else. For a format built specifically around these principles, see the Gemini-optimized blog post template.

llms.txt and llms-full.txt

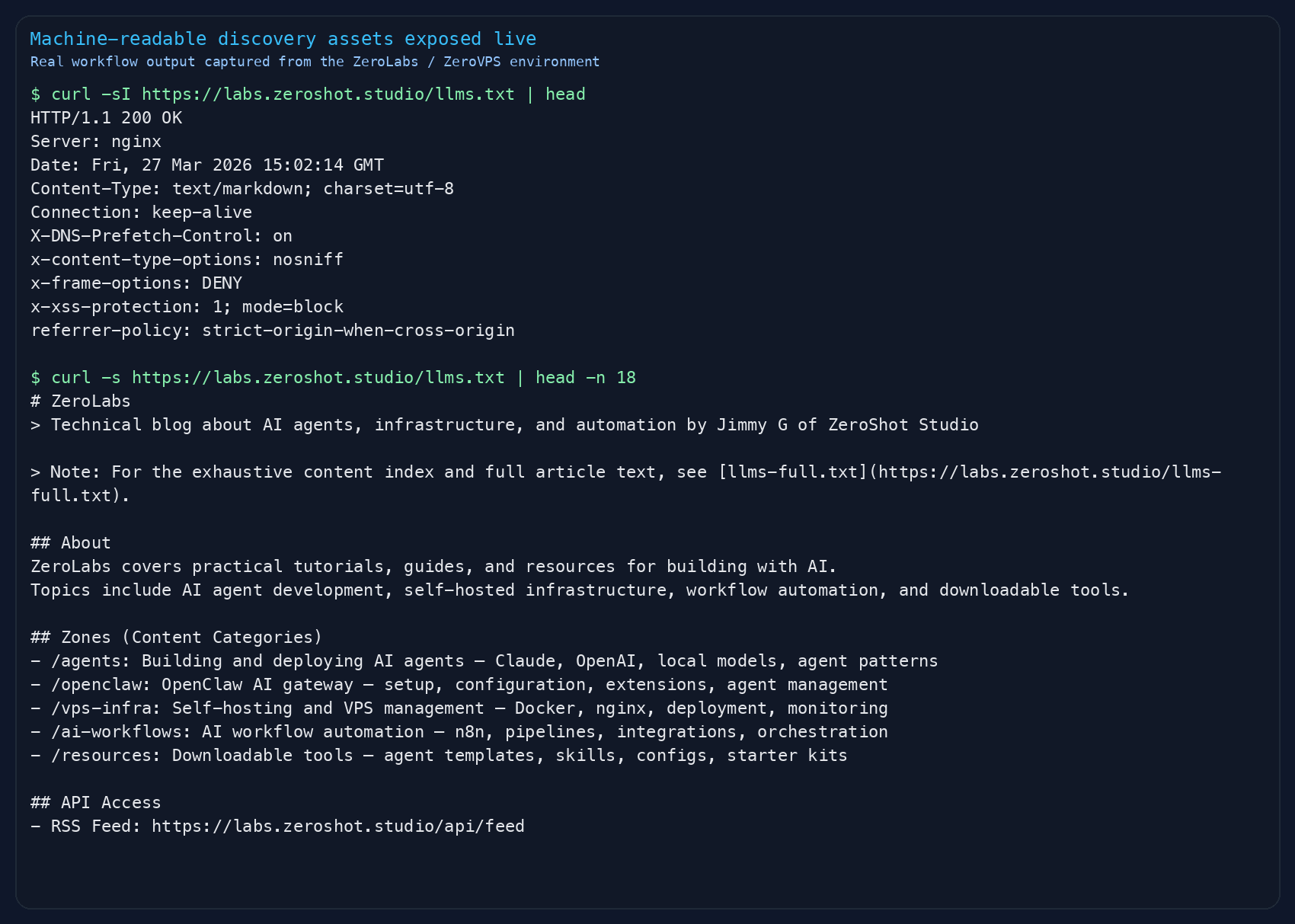

These files sit at your site root and serve as structured guides for AI crawlers. Think of llms.txt as a brief site summary (who you are, what you cover, where to find things) and llms-full.txt as a complete content index with all your posts and their full text.

At labs.zeroshot.studio, our llms.txt includes site description, author credentials, content zones, key topics, API access points (RSS, sitemap, MCP endpoints), and attribution guidelines. Making this information explicitly available means AI crawlers don't have to guess what your site is about.
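For a sense of what such a file can look like, here is a small generator. It follows the layout of the community llms.txt proposal as commonly described (a title heading, a blockquote summary, then sections of annotated links). That layout is a proposal rather than a ratified standard, and the function name, section names, and URLs below are placeholders, not the actual contents of the labs.zeroshot.studio file.

```python
def build_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt body.

    sections maps a heading to a list of (link name, URL, short note).
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


llms_txt = build_llms_txt(
    "Example Labs",
    "Technical guides on self-hosting and AI tooling.",
    {
        "Feeds": [
            ("RSS", "https://example.com/rss.xml", "all posts"),
            ("Sitemap", "https://example.com/sitemap.xml", "every indexed page"),
        ],
    },
)
print(llms_txt)
```

Generating the file from your content index at build time keeps it in step with what is actually published.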

RSS and sitemap hygiene

Both formats should include last-modified dates (`<lastmod>` in a sitemap). Zone filtering in RSS feeds helps AI systems understand your content taxonomy. Make both discoverable via `<link>` tags in your HTML head. These are boring fundamentals, but they're the plumbing that makes everything else work.
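As an illustration of the sitemap side, the sketch below emits a minimal sitemap in which every URL carries a `<lastmod>` element, the tag the sitemap protocol uses for last-modified dates. The function name and the example URL are placeholders, and the dates are assumed to be real content-edit dates, not build times.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build a sitemap from (url, last content edit as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # encoding="unicode" returns a str without the XML declaration.
    return ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([("https://example.com/post-slug", "2026-03-22")])
print(sitemap_xml)
```

Prepend an XML declaration when writing the result to `sitemap.xml`.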

How do you measure GEO performance?

This is where it gets honest. GEO measurement is harder than SEO measurement because the feedback loops are less direct. Here's what actually works:

Direct metrics

Manual citation testing. Monthly, query your target topics across ChatGPT, Perplexity, Claude, and Google AI Overviews. Document when your brand or content gets mentioned. Track position within responses, because early citations carry more weight.

AI referral traffic. Set up segments in Google Analytics 4 for traffic from chatgpt.com (previously chat.openai.com), perplexity.ai, and similar domains. This traffic converts at roughly 5x the rate of standard organic traffic because users arrive with high intent.
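If you also process raw referrer data outside GA4, the same segmentation can be sketched in a few lines. The host list below is an assumption that you should extend and keep current; `ai_source` is a hypothetical helper name.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hosts; extend this as assistants add or change domains.
AI_REFERRER_HOSTS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI assistant a referrer URL belongs to, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


print(ai_source("https://www.perplexity.ai/search?q=self-hosting"))
```

Many AI clients send no referrer at all, so counts from this approach are a lower bound.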

AI crawler logs. Monitor your server logs for GPTBot, ClaudeBot, PerplexityBot, and other AI crawlers. Their crawl patterns tell you which pages they're indexing and how frequently.
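A small log scan is often enough to see which pages those crawlers fetch. The sketch below assumes the common "combined" access log format used by nginx and Apache and matches crawlers by substring in the user agent; the function name is hypothetical, and user agents can be spoofed, so treat the counts as indicative.

```python
import re
from collections import Counter

AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined log format: "METHOD /path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_hits(lines) -> Counter:
    """Count requests per (crawler, path) from access log lines."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        for bot in AI_CRAWLERS:
            if bot.lower() in agent:
                hits[(bot, match["path"])] += 1
    return hits


sample_log = [
    '203.0.113.7 - - [27/Mar/2026:10:00:00 +0000] "GET /posts/geo HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.4 - - [27/Mar/2026:10:00:05 +0000] "GET /posts/geo HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
print(crawler_hits(sample_log))
```

Running this over a month of logs shows which pages each crawler revisits and how often.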

Indirect metrics

Branded search volume. If your GEO is working, you'll see increases in people searching for your brand name directly. Google Search Console tracks this.

Direct traffic growth. Non-referral traffic trends upward as more people encounter your brand through AI responses and type your URL directly.

The timeline is similar to SEO: expect 3-6 months before seeing consistent results. GEO compounds like SEO does, so early effort pays off disproportionately later.

Frequently asked questions

- Does GEO replace traditional SEO?

No. GEO is an additional layer on top of solid SEO fundamentals. Roughly 80% of good SEO practice carries directly into GEO. The structure, the specificity, the schema markup, all of that helps both. GEO adds paragraph density requirements, answer nugget density, experience markers, and machine-readable endpoints. Do both.

- How long before GEO efforts show results?

Expect 3-6 months, similar to traditional SEO. The timeline depends on your existing domain authority, content volume, and how competitive your topics are. Start with your highest-traffic existing pages, restructure them with GEO principles, then apply the framework to all new content.

- Do I need to create separate content for AI search?

No. Content that performs well in GEO also performs well for human readers. Self-contained paragraphs, specific data, clear structure, expert citations. All of these improve the reading experience. Write for humans, structure for machines.

- What's the minimum schema markup I need?

Start with Article, Person, and Organization schemas on every page. Add FAQPage when you have an FAQ section and HowTo when you have step-by-step instructions. Add BreadcrumbList for navigation context. These six cover 90% of what AI systems look for in structured data.

- Is keyword stuffing still effective for AI search?

The GEO study (Aggarwal et al.) found that keyword stuffing performed poorly in generative contexts. AI search rewards substance: verifiable statistics, credible citations, expert quotes, and comprehensive coverage. Focus on being the best answer, not the most optimised one.

Your GEO action plan

Here's the practical sequence. Don't try to do everything at once.

- Baseline audit. Test your target topics across ChatGPT, Perplexity, Claude, and Google AI Overviews. Document where you appear (or don't). Identify competitors who do get cited and study their content structure.

- Content audit. Run your top 5-10 pages through the GEO & E-E-A-T checklist (download it below). Add statistics, source citations, and expert quotes to each one. Restructure paragraphs to stay under 120 words. Add FAQ sections where they make sense.

- Implementation sprint. Set up the full JSON-LD @graph with Article, Person, and Organization entities. Create your `llms.txt` and `llms-full.txt` files. Verify your sitemap includes `lastModified` dates. Add conditional FAQPage and HowTo schemas.

- Build authority. Develop original research and benchmarks. Engage genuinely on Reddit and relevant forums. Pursue 1-2 guest posts in authoritative publications. Every external mention strengthens your E-E-A-T signals.

- Measure and iterate. Monthly citation testing. Track AI referral traffic. Review what gets cited and double down on those content patterns. Update existing content when tools and information change, keeping `dateModified` accurate.

We've built an agent skill that automates the audit portion of this process. Drop it into Claude Code or any MCP-compatible tool and it'll check your content against every item on the checklist. If you want to see the full pipeline in action, read how we built an AI review agent suite for content quality. Download both the checklist and the skill below.

Downloads

geo-eeat-checklist.md

GEO & E-E-A-T compliance checklist with 40+ audit items across content structure, schema, citations, and measurement.

7.4 KB

geo-eeat-audit-skill.md

Agent skill file for automated GEO & E-E-A-T content auditing. Scores content out of 100 with actionable recommendations.

9.2 KB