Trace AI Content Handoffs Without Losing Proof Assets

Trace AI content handoffs with one run ID, a manifest, and proof metadata that keep draft, review, and publish states aligned.

Last updated: 2026-04-05 · Tested against the current ZeroContentPipeline workspace docs plus the linked OpenTelemetry, GitHub Actions, Playwright, W3C PROV, and MCP documentation

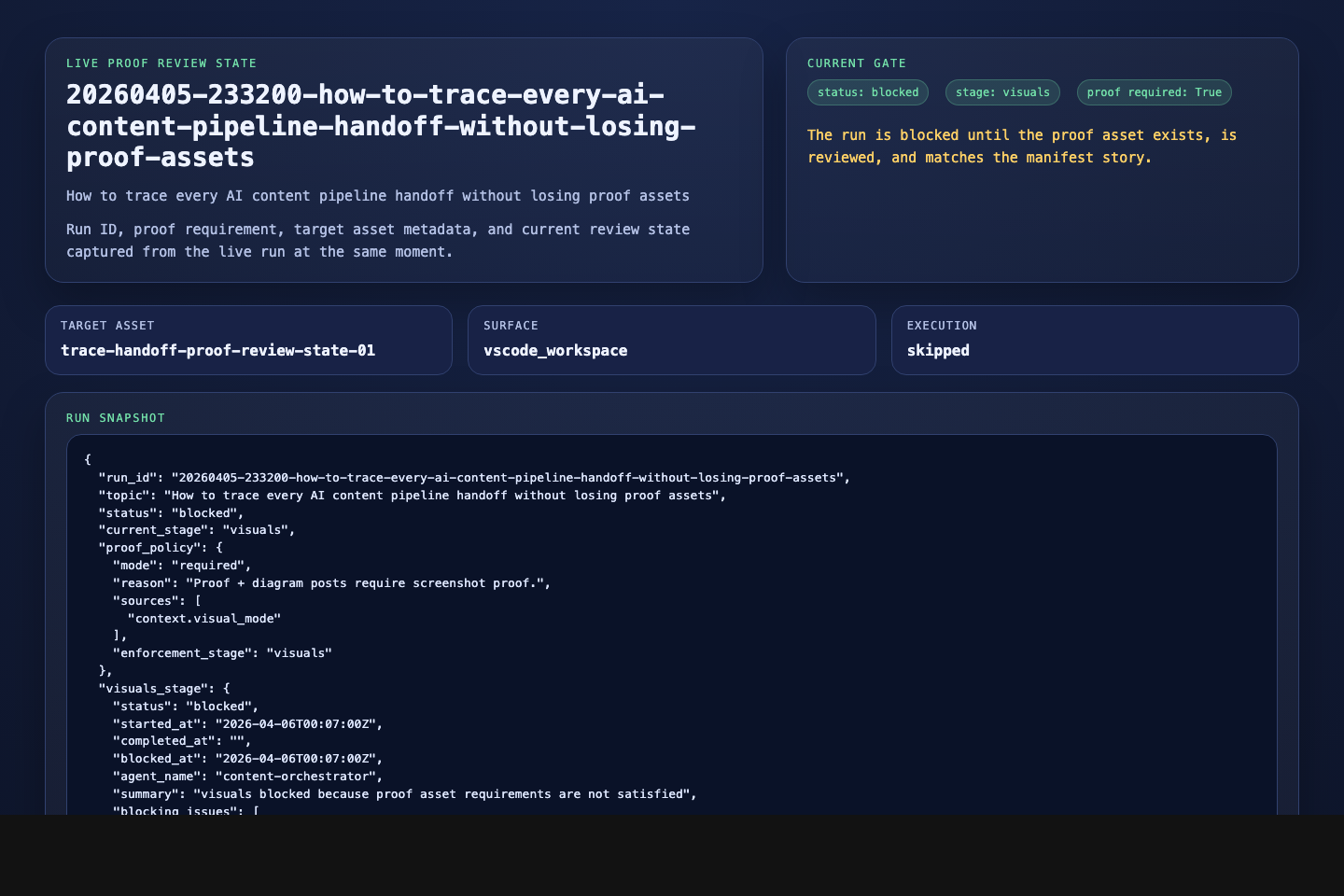

In this run, content/ holds the live draft and runs/ holds the review state. I have watched drift show up when a rename lands in only one place: the asset path shifts, the run ID falls out of sync, and the sentence no longer matches the evidence beside it.

The fix is smaller than most teams expect. You do not need a giant observability stack to keep evidence intact. You need one run spine, explicit handoff records, and evidence that says exactly what it proves.

```mermaid
flowchart LR
  %% File: content/20260405-233200-how-to-trace-every-ai-content-pipeline-handoff-without-losing-proof-assets/visuals/diagrams/mermaid/trace-handoff-evidence-chain-01.mmd
  A["Run ID created"] --> B["Draft, manifest, and assets carry same ID"]
  B --> C["Each handoff records entity, activity, and agent"]
  C --> D["Proof asset stored with path, caption, and digest"]
  D --> E["Reviewer checks sentence, manifest, and asset together"]
  E --> F["Publish waits until the chain agrees"]
```

What counts as a handoff in an AI content pipeline?

In practice, I treat a handoff as any boundary where the next step can lose context, proof, or approval state. Human to model is one. Model to tool is another. Tool to workflow job is another. Reviewer to publisher counts too.

OpenTelemetry describes traces as collections of spans, with SpanContext propagated across boundaries so related work can still be connected later (OpenTelemetry Overview). You do not need full tracing to borrow the useful bit: one stable identifier that survives the boundary.
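You can borrow the propagation idea without adopting OpenTelemetry. Here is a minimal sketch that assumes each handoff travels as a plain dict "carrier", such as a job's input payload or a file's front matter. The sending side injects the run ID, and the receiving side extracts it and refuses to continue without it. The `inject_run_id`/`extract_run_id` helpers, the `x-run-id` key, and the timestamp-plus-suffix ID format are all hypothetical. None of them are OpenTelemetry APIs.

```python
import uuid
from datetime import datetime, timezone

# Hypothetical carrier key; any stable name works if every step agrees on it.
RUN_ID_KEY = "x-run-id"


def mint_run_id() -> str:
    """Create one run ID before anything branches: UTC timestamp plus a short random suffix."""
    stamp = datetime.now(timezone.utc).strftime("%Y%m%d-%H%M%S")
    return f"{stamp}-{uuid.uuid4().hex[:8]}"


def inject_run_id(carrier: dict, run_id: str) -> dict:
    """Sending side: copy the run ID into whatever payload crosses the boundary."""
    carrier[RUN_ID_KEY] = run_id
    return carrier


def extract_run_id(carrier: dict) -> str:
    """Receiving side: fail loudly instead of silently starting a new, unlinked run."""
    run_id = carrier.get(RUN_ID_KEY)
    if not run_id:
        raise ValueError("handoff arrived without a run ID; refusing to continue")
    return run_id


run_id = mint_run_id()
payload = inject_run_id({"step": "draft-to-review"}, run_id)
assert extract_run_id(payload) == run_id
```

The design choice is the loud failure on extract. A missing ID should stop the handoff rather than quietly mint a fresh one.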

The W3C PROV overview models provenance through entities, activities, and agents, and says the PROV family is meant to support interoperable provenance interchange, reproducibility, and versioning (W3C PROV Overview). That maps neatly onto content work. A draft is an entity. A review pass is an activity. A model, tool, or editor is an agent.
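A handoff record needs only those three roles plus the run ID. Here is a minimal sketch that assumes records are appended as JSON lines to a log file under runs/. The `HandoffRecord` class, its field names, and the log path are hypothetical. They loosely mirror PROV's entity/activity/agent vocabulary but do not implement the PROV data model or its serializations.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from pathlib import Path


@dataclass(frozen=True)
class HandoffRecord:
    run_id: str    # the run spine every record must name
    entity: str    # what moved, e.g. a draft or an asset path
    activity: str  # what happened to it, e.g. "review-pass"
    agent: str     # who or what did the work: a model, tool, or editor
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_handoff(log_path: Path, record: HandoffRecord) -> None:
    """Append one record per line so the history stays append-only and diffable."""
    log_path.parent.mkdir(parents=True, exist_ok=True)
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")


def read_handoffs(log_path: Path) -> list[HandoffRecord]:
    """Load every record back, skipping blank lines."""
    with log_path.open(encoding="utf-8") as fh:
        return [HandoffRecord(**json.loads(line)) for line in fh if line.strip()]
```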

The shape already exists in this workspace. Because content/ holds the active draft and runs/ holds the run-local state, drift between the two shows up instead of hiding. The current run manifest separates visual_mode, proof_required, safety_review, and asset_plan, but that separation only helps if the draft and the proof file carry the same run ID.
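The four field names in the sketch below come from the workspace manifest. Their value shapes are assumptions. This minimal sketch assumes the manifest is a dict with a `run_id` key and that `asset_plan` lists asset paths with the run ID as one path segment. The `check_alignment` helper and the sample values are hypothetical. The helper reports every place where the draft, the proof, or a planned asset names a different run.

```python
def check_alignment(manifest: dict, draft_run_id: str, proof_run_id: str) -> list[str]:
    """Return human-readable mismatches; an empty list means the IDs agree."""
    expected = manifest["run_id"]
    problems = []
    if draft_run_id != expected:
        problems.append(f"draft names {draft_run_id!r}, manifest names {expected!r}")
    if proof_run_id != expected:
        problems.append(f"proof names {proof_run_id!r}, manifest names {expected!r}")
    # Assumption: each planned asset path contains the run ID as a path segment.
    for asset in manifest.get("asset_plan", []):
        if expected not in asset.split("/"):
            problems.append(f"asset {asset!r} is not under run {expected!r}")
    return problems


# Hypothetical manifest: only the four field names come from the workspace.
manifest = {
    "run_id": "20260405-233200",
    "visual_mode": "screenshot",
    "proof_required": True,
    "safety_review": "passed",
    "asset_plan": ["runs/20260405-233200/proof/review-state.png"],
}
```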

GitHub Actions and Playwright are just the concrete examples here. The pattern still works with other runners and capture tools, as long as the handoff record names the same run and the proof object stays tied to the claim.

How do you trace AI content pipeline handoffs without losing proof assets?

I use one contiguous chain from the first prompt to the publish gate.

- Mint one run ID before anything branches. Use the same identifier on the brief, draft, manifest, capture, audit, and artifact.

- Record each handoff as entity, activity, and agent. Say what moved, what changed, and who or what did the work.

- Separate the claim from the proof. Keep the article claim in prose and the evidence in the manifest and asset metadata.

- Store an integrity digest with the artifact. GitHub Actions can move outputs between jobs, and artifact download validates the digest (Workflow artifacts; Store and share data with workflow artifacts).

- Choose the smallest truthful capture. Playwright supports full-page and element screenshots (Playwright Screenshots).

- Gate publish on agreement. If the sentence, manifest, and proof disagree, stop.
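GitHub Actions validates digests for its own artifacts, but you can apply the same idea wherever the proof travels. Here is a minimal sketch using Python's standard `hashlib`. When the proof is stored, the code records a SHA-256 digest next to its path and caption. At the next handoff, it recomputes the digest. The metadata keys (`run_id`, `path`, `caption`, `sha256`) and the helper names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream the file in chunks so large captures or reports are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def describe_proof(path: Path, caption: str, run_id: str) -> dict:
    """Metadata written when the proof asset is stored."""
    return {"run_id": run_id, "path": str(path), "caption": caption, "sha256": sha256_of(path)}


def verify_proof(meta: dict) -> bool:
    """Run at the next handoff: True only if the file exists and still matches its digest."""
    path = Path(meta["path"])
    return path.is_file() and sha256_of(path) == meta["sha256"]
```

If a capture is edited after review, `verify_proof` returns False. That mismatch is the signal to stop the publish gate.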

The hard rule: a handoff is not done when a file exists. It is done when the next step can verify what the file proves, who produced it, and which run it belongs to.
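The hard rule becomes a small check the receiving step can run before it accepts a handoff. This minimal sketch assumes proof metadata is a dict. The keys `proves`, `produced_by`, and `run_id` are hypothetical names for "what the file proves, who produced it, and which run it belongs to." The `handoff_gaps` helper is hypothetical too.

```python
# Hypothetical metadata keys mapped to the question each one answers.
REQUIRED = {
    "proves": "what the file proves",
    "produced_by": "who or what produced it",
    "run_id": "which run it belongs to",
}


def handoff_gaps(meta: dict, expected_run_id: str) -> list[str]:
    """List what the next step cannot verify; an empty list means the handoff is done."""
    gaps = [f"missing {label} ({key})" for key, label in REQUIRED.items() if not meta.get(key)]
    if meta.get("run_id") and meta["run_id"] != expected_run_id:
        gaps.append(f"belongs to run {meta['run_id']!r}, not {expected_run_id!r}")
    return gaps
```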

When is a manifest enough, and when do you need more proof?

A manifest is the routing layer. It tells the system where the asset lives, which run owns it, what class of output it is, and whether it is safe to move forward. That is good for audits and machine checks.

It is not always enough as evidence. If the article claims a visible workflow state, a review decision, or a specific execution result, you need something more literal than metadata. A screenshot, report, or stored artifact gives the reviewer a real object to inspect.

Use the manifest on its own when the handoff is mostly administrative: a draft moved into review, a bundle snapshot written to the run folder, an archived file landing where the next job expects it.

Use additional proof when a sentence would fall apart under challenge. If you say an audit passed, the report or capture is stronger than a manifest field alone. If you say a visual was reviewed and sanitized, the proof should show that exact state instead of a nearby screen and a hopeful caption.
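You can write the split between administrative handoffs and challengeable claims as a rule the pipeline checks. In this minimal sketch, the claim kinds and the `needs_literal_proof`/`missing_proof` helpers are hypothetical labels for the cases described above. They are not fields from the workspace manifest. Unknown kinds deliberately default to needing proof.

```python
# Hypothetical claim kinds for handoffs the manifest alone can cover.
ADMINISTRATIVE = {"moved-to-review", "bundle-snapshot", "archived-file"}


def needs_literal_proof(claim_kind: str) -> bool:
    """Administrative moves ride on the manifest; anything else (visible states,
    review decisions, execution results, audits) needs a literal proof object.
    Unknown kinds land on the strict side instead of slipping through."""
    return claim_kind not in ADMINISTRATIVE


def missing_proof(claims: list[dict]) -> list[str]:
    """Return sentences that need a screenshot, report, or artifact but have none attached."""
    return [
        c["sentence"]
        for c in claims
        if needs_literal_proof(c["kind"]) and not c.get("proof_path")
    ]
```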

I keep the proof capture tight: one sanitized frame that shows the run ID, the review state, and the matching manifest record in the same moment.

This is where MCP's split between resources and tools becomes useful. Resources are data that servers expose to clients as context, and tools are callable actions that servers expose for models to invoke (MCP Resources, MCP Tools).

In a content pipeline, that distinction matters. Read-only context handoffs and action handoffs should not blur into one vague story about "the agent did it."
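One way to keep the two kinds of handoff apart is to make each record declare which one it is. This minimal sketch gives each logged step a `kind` of either "context" or "action". A "context" step is read-only, such as reading an MCP resource. An "action" step changes workflow state, such as calling an MCP tool. The `StepRecord` fields and the `split_mixed_step` helper are hypothetical and not part of the MCP specification. A step that did both becomes separate records instead of one vague entry.

```python
from dataclasses import dataclass
from typing import Literal

# Type hint only; the dataclass does not enforce these values at runtime.
Kind = Literal["context", "action"]


@dataclass(frozen=True)
class StepRecord:
    run_id: str
    kind: Kind   # "context" = read-only input, "action" = changed workflow state
    source: str  # resource URI that was read, or tool name that was called
    agent: str


def split_mixed_step(run_id: str, agent: str, read_from: list[str], called: list[str]) -> list[StepRecord]:
    """Turn one "the agent did it" step into explicit context and action records."""
    records = [StepRecord(run_id, "context", uri, agent) for uri in read_from]
    records += [StepRecord(run_id, "action", tool, agent) for tool in called]
    return records
```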

When should you use a screenshot, a log, a diagram, or a stored artifact?

Pick the lightest thing that can survive review.

| If you need to prove... | Best proof surface | Why it holds up |
|---|---|---|
| The route through a workflow | Mermaid diagram | It explains the chain without pretending to be execution evidence |
| A visible UI or review state | Screenshot | It shows the literal state the sentence depends on |
| One badge, panel, or result | Element screenshot | It keeps the proof narrow and readable |
| A packaged output or generated report | Stored artifact | It preserves the actual output for later inspection |
| A machine decision trail | Log excerpt or report | It shows event history with less visual noise |

The trade-off is simple. Diagrams explain, screenshots verify, stored artifacts preserve, and logs record event history. Mix those jobs together and review gets muddy fast.

The smallest honest capture is usually the best reviewer surface. It is easier to inspect, easier to caption, and harder to oversell. That is why the proof-first rule helps: one image proves one state, one report proves one machine outcome, and one diagram explains one path through the system.
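The proof-surface table can also serve as a lookup that the pipeline checks when it plans assets. In this minimal sketch, the need and surface labels are hypothetical shorthand for the table rows. The `review_surface` helper is hypothetical too. It flags two problems: a diagram offered as execution evidence, and a broader capture than the claim needs.

```python
from typing import Optional

# Mirrors the table above; the labels are hypothetical shorthand.
BEST_SURFACE = {
    "workflow-route": "mermaid-diagram",
    "visible-state": "screenshot",
    "single-result": "element-screenshot",
    "packaged-output": "stored-artifact",
    "decision-trail": "log-excerpt",
}

# Surfaces that explain rather than verify.
EXPLANATORY = {"mermaid-diagram"}


def review_surface(need: str, surface: str) -> Optional[str]:
    """Return a warning if the planned proof surface does not fit the need, else None."""
    expected = BEST_SURFACE.get(need)
    if expected is None:
        return f"unknown need {need!r}; add it to the table before planning assets"
    if surface in EXPLANATORY and need != "workflow-route":
        return f"{surface} explains the route but cannot verify {need!r}"
    if surface != expected:
        return f"{need!r} is best proven with {expected}, not {surface}"
    return None
```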

Common errors and gotchas (troubleshooting)

The first warning sign is identifier drift. The draft uses one run ID, the asset folder uses another, or the review note stops naming the same object as the manifest. From there, every later step gets more interpretive than it should be.
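Identifier drift is cheap to detect mechanically once run IDs follow a predictable format. This minimal sketch assumes run IDs look like the `YYYYMMDD-HHMMSS` timestamp that prefixes this run's content folder. That format is an assumption, so adjust the pattern to your own. The `drift_report` helper is hypothetical. It collects every run ID mentioned across the draft, asset paths, and review note. It then flags any source that names no ID, and every ID when more than one distinct ID appears.

```python
import re

# Assumed format: an 8-digit date and a 6-digit time, e.g. 20260405-233200.
RUN_ID_PATTERN = re.compile(r"\b\d{8}-\d{6}\b")


def drift_report(sources: dict[str, str]) -> list[str]:
    """Return drift problems; an empty list means every source names the same single run."""
    seen: dict[str, set[str]] = {}
    silent = []
    for name, text in sources.items():
        ids = set(RUN_ID_PATTERN.findall(text))
        if not ids:
            silent.append(name)  # this source stopped naming any run at all
        for run_id in ids:
            seen.setdefault(run_id, set()).add(name)
    problems = [f"{name} names no run ID" for name in silent]
    if len(seen) > 1:
        for run_id, names in sorted(seen.items()):
            problems.append(f"{run_id} appears in {', '.join(sorted(names))}")
    return problems
```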

The second warning sign is proof inflation. Someone captures a whole page to look safe, but the actual claim depends on one badge in a corner. That makes the proof harder to read and easier to dispute.

The third warning sign is action and context collapsing into the same blob. If a tool both fetched read-only context and changed workflow state, the handoff record should say that plainly. Otherwise the reviewer is left inferring whether they are looking at evidence, execution, or both.

For adjacent examples, "How to Debug ZeroContentPipeline Proofs" covers review drift, "You Don't Need an AI Agent" covers the workflow getting too magical, and "How Claude Published Directly to Labs via MCP" covers the final boundary where traced work becomes a live post.

What are the most common questions?

What counts as a handoff in an AI content pipeline?

Any boundary where the next step could lose identifiers, context, proof, or approval state counts as a handoff. That includes human-to-model, model-to-tool, tool-to-workflow, workflow-to-review, and review-to-publish boundaries.

How do I avoid losing proof assets when a step moves to another tool or person?

Carry one run ID everywhere, keep the manifest entry next to the asset metadata, and make the caption describe the exact sentence the asset supports. If the next operator has to guess what the file proves, the handoff was too loose.

Is a manifest enough, or do I also need traces, screenshots, and digests?

A manifest is the index, not the whole evidence chain. Use it to route and classify work, then add traces or step records for continuity, screenshots for visible states, and digests or archived outputs when file integrity matters.

When should I use a screenshot versus a log, a diagram, or a stored artifact?

Use screenshots for visible states, logs for event history, diagrams for route explanation, and stored artifacts for outputs you may need later. Many workflows need one explanatory diagram plus one literal proof object, not a gallery of decorative captures.

Start with one sentence that matters. Trace it from prompt to publish candidate. Make the run ID, manifest entry, and proof object agree, then expand that pattern to the rest of the pipeline.

That is not flashy, but it gives you a chain you can defend when someone asks why the workflow believes what it believes.

Want the broader system around this? Read the rest of the AI Workflows cluster, then follow the links above into review drift, agent boundaries, and MCP-based publishing.